|

Aleksandar Gavrić

I am a PhD researcher at TU Wien, working at the Business Informatics Group under Prof. Dominik Bork and Prof. Henderik Proper.

|

|

Research

I'm interested in conceptual modeling, world-model construction, computer vision, deep learning, generative AI, multimodal representation learning, and differentiable algorithms for discovering, understanding, and simulating real-world processes. Some papers are highlighted. |

|

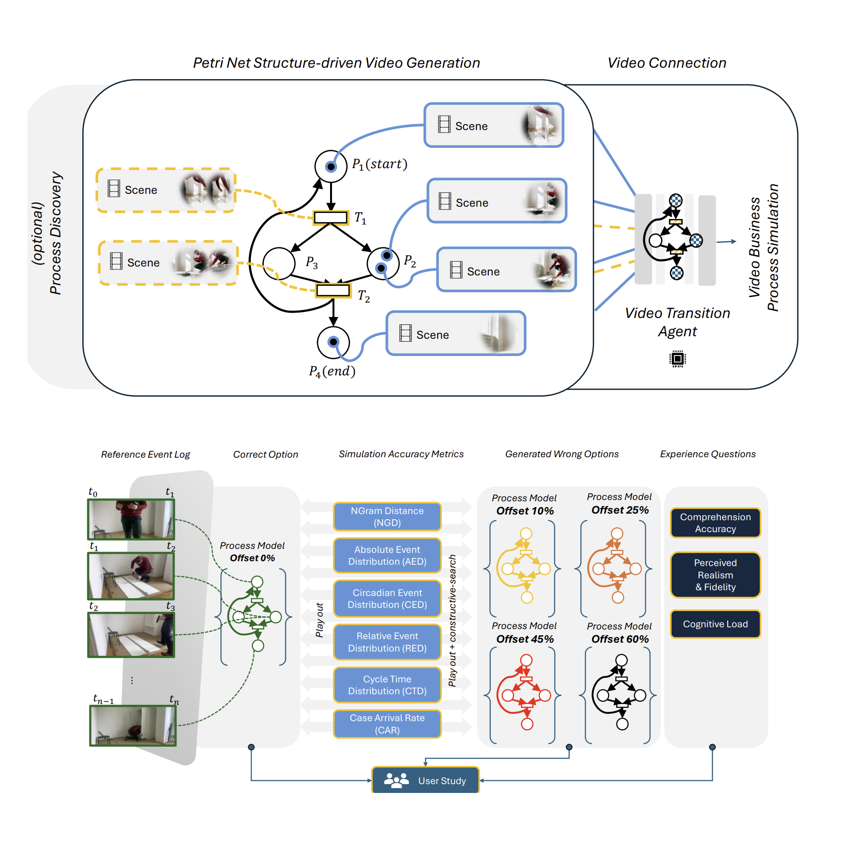

Petri Net Structure-Driven Video Generation

Aleksandar Gavric, Dominik Bork, Henderik A. Proper NeurIPS Workshop "What Makes a Good Video", 2025 [BibTeX] Built a structure-conditioned video generation pipeline using Petri nets as causal scaffolding for diffusion models. |

|

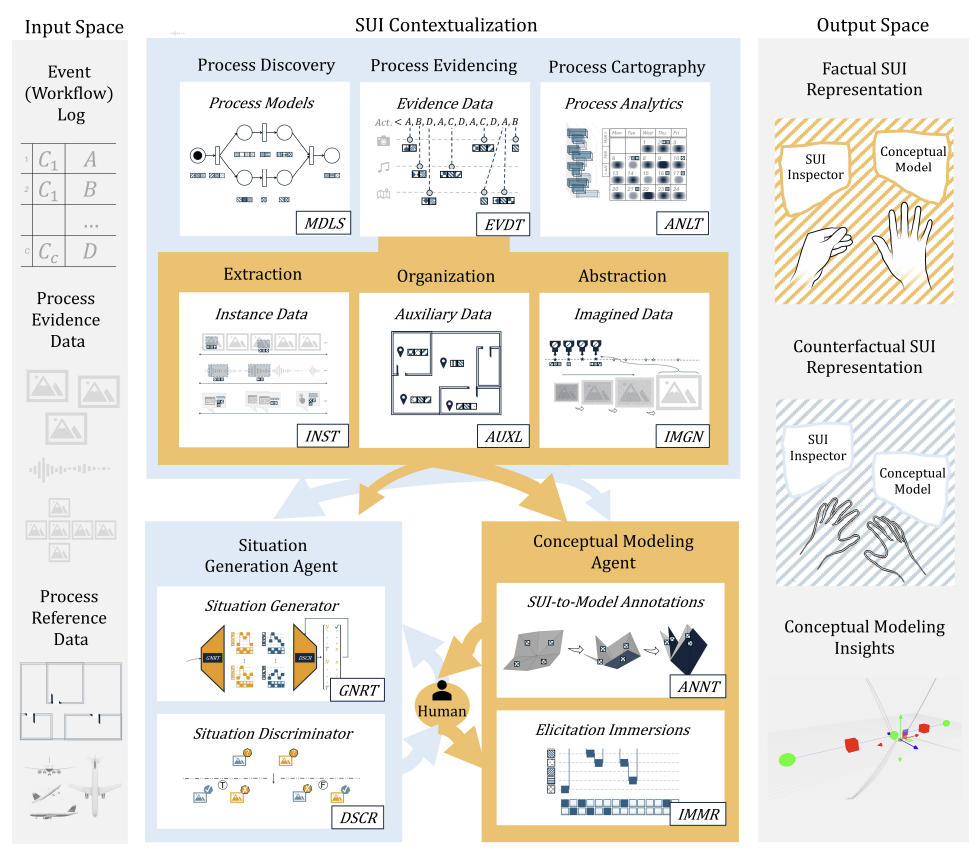

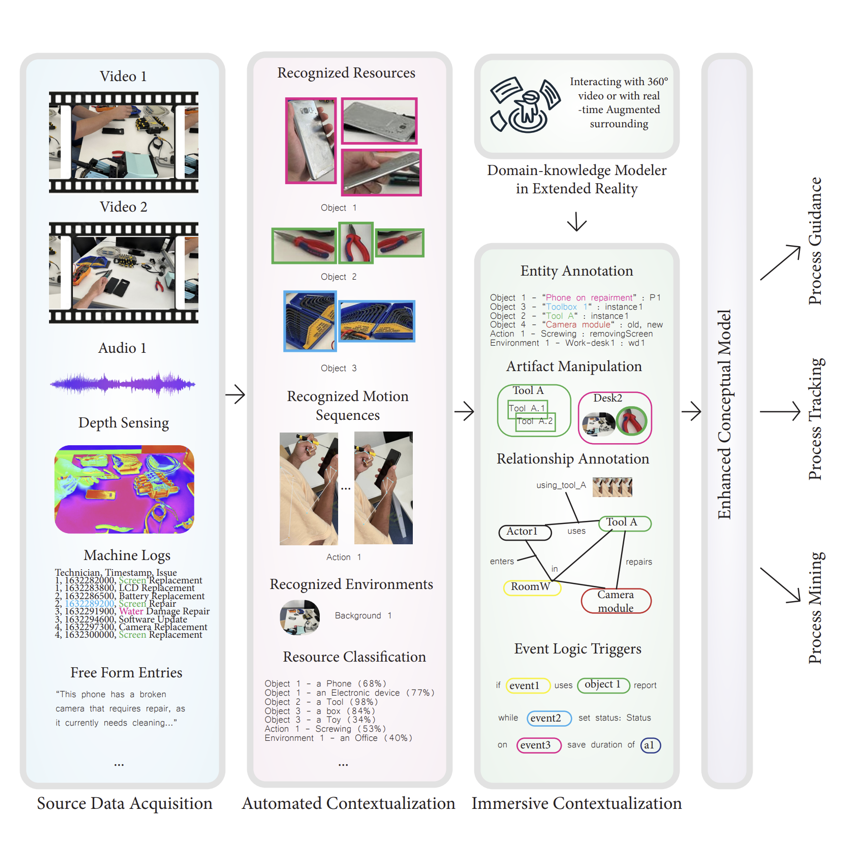

Enhancing Conceptual Modeling through Multimodal Data Analysis and Mixed Reality

Aleksandar Gavric Doctoral Dissertation A unified research framework integrating multimodal process mining with mixed-reality elicitation. The thesis models how real-world work unfolds by combining video, audio, interaction logs, sensor data, and immersive MR simulations to extract tacit expertise and build next-generation conceptual models. |

|

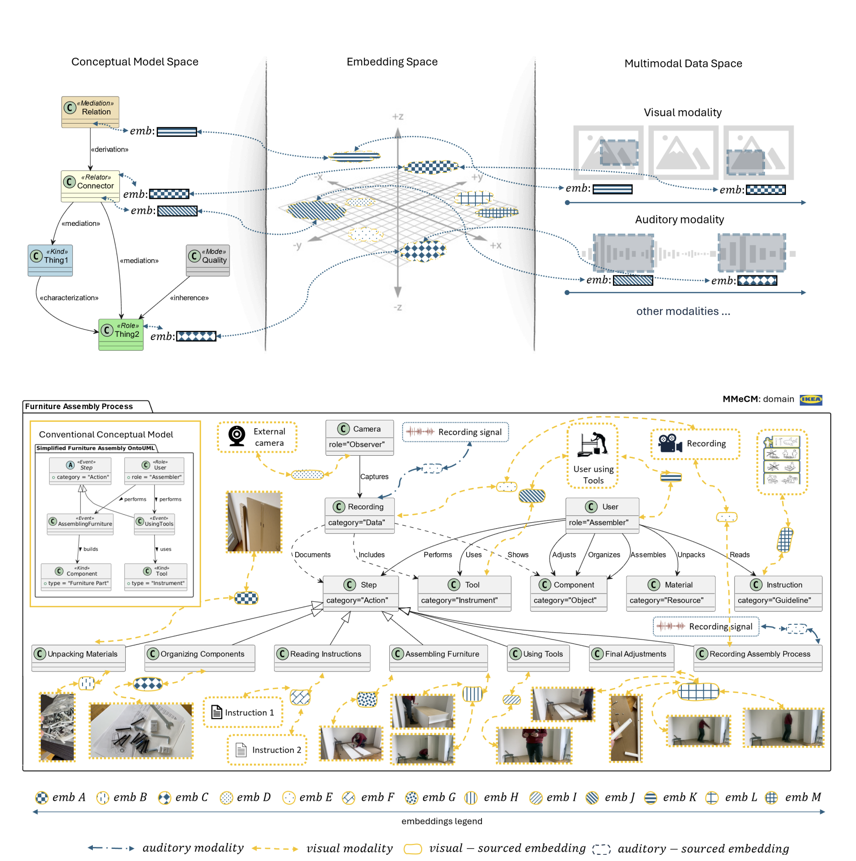

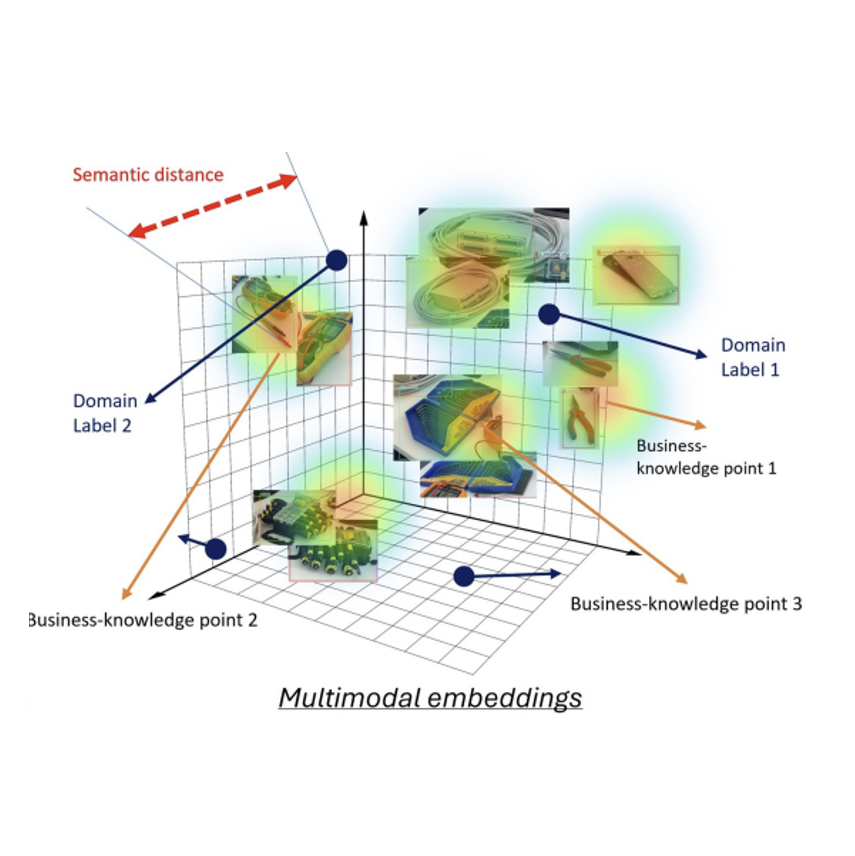

Towards the Enrichment of Conceptual Models with Multimodal Data

Aleksandar Gavric, Dominik Bork, Henderik A. Proper ISD 2025 [BibTeX] Proposed embedding-based multimodal fusion and crossmodal alignment to enrich conceptual models. |

|

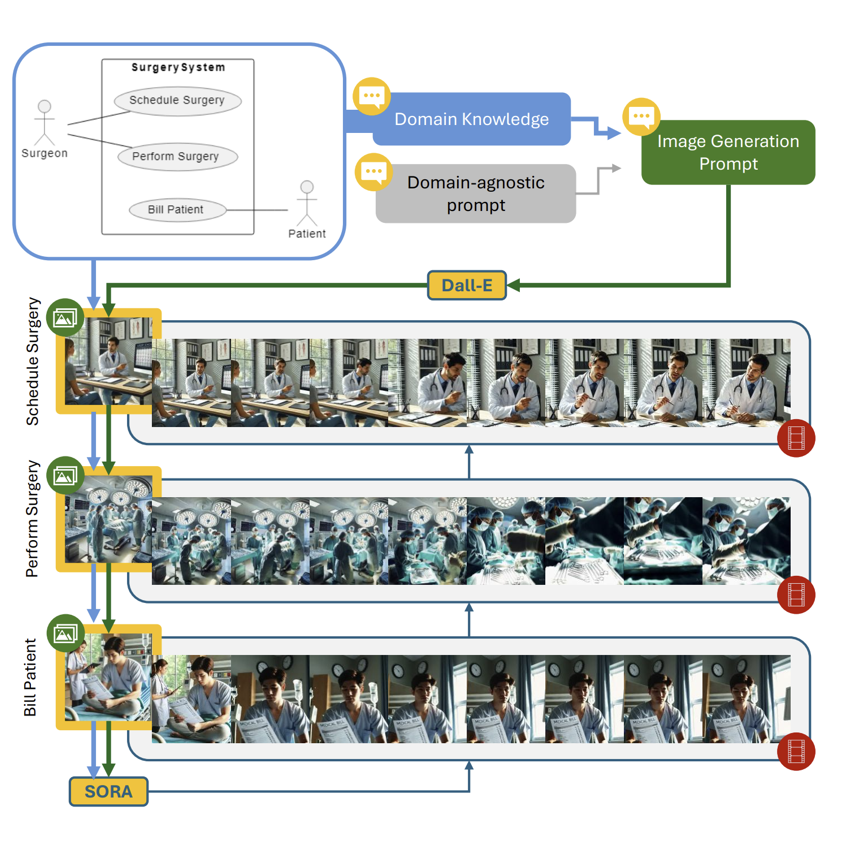

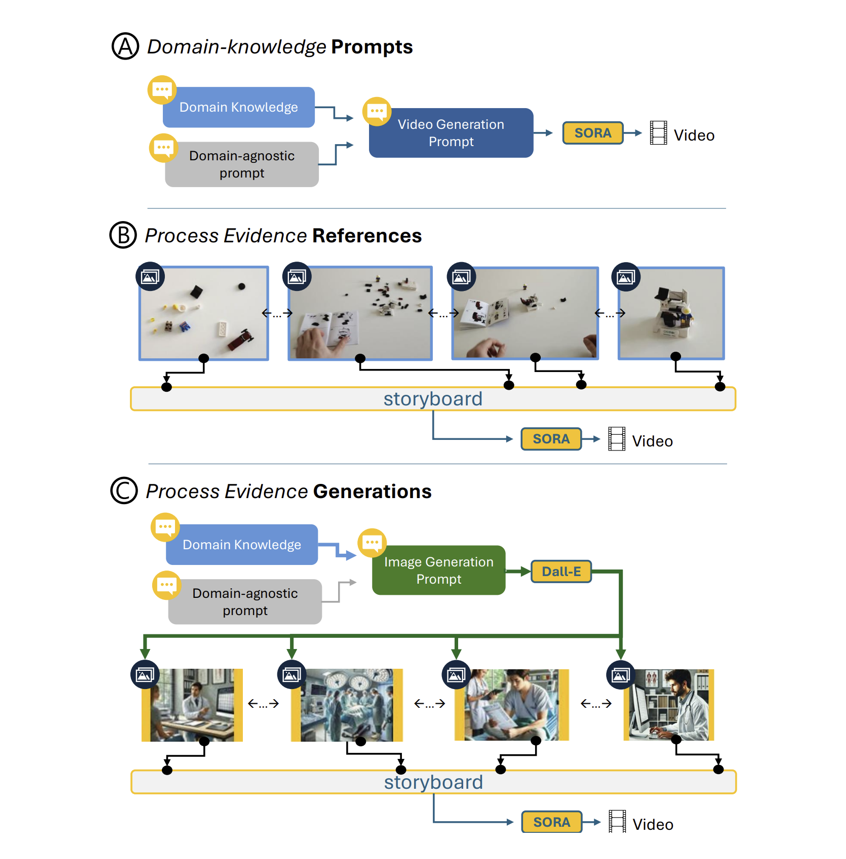

Turning Process Models into Videos

Aleksandar Gavric, Dominik Bork, Henderik A. Proper CBI 2025 [BibTeX] Converts BPMN models into executable synthetic video animations for automated evaluation. |

|

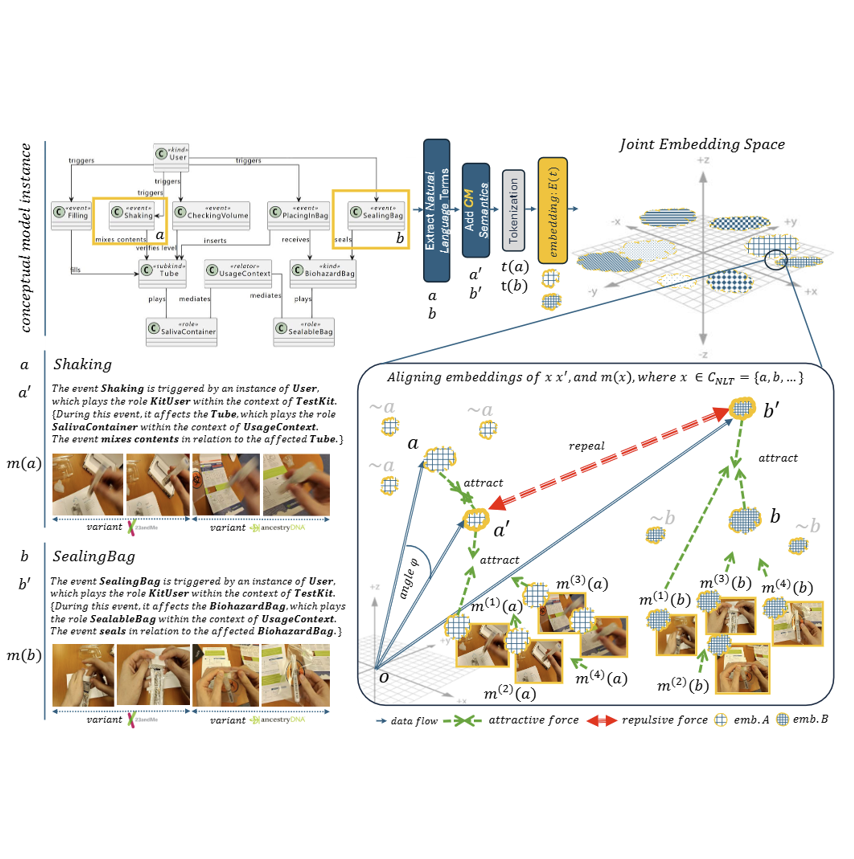

Aligning AI Model’s Knowledge and Conceptual Model’s Symbols

Aleksandar Gavric, Dominik Bork, Henderik A. Proper [BibTeX] Proposes an alignment framework that turns multimodal embeddings into formal conceptual model symbols, enabling explainability, traceability, and hybrid reasoning in mixed human–AI modeling workflows. |

|

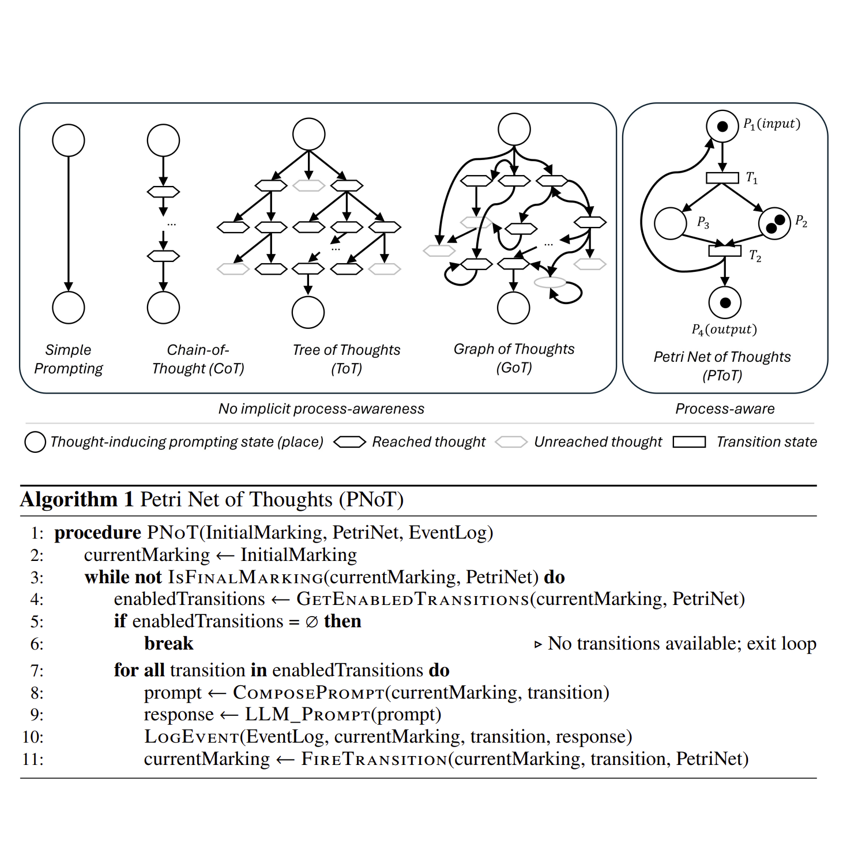

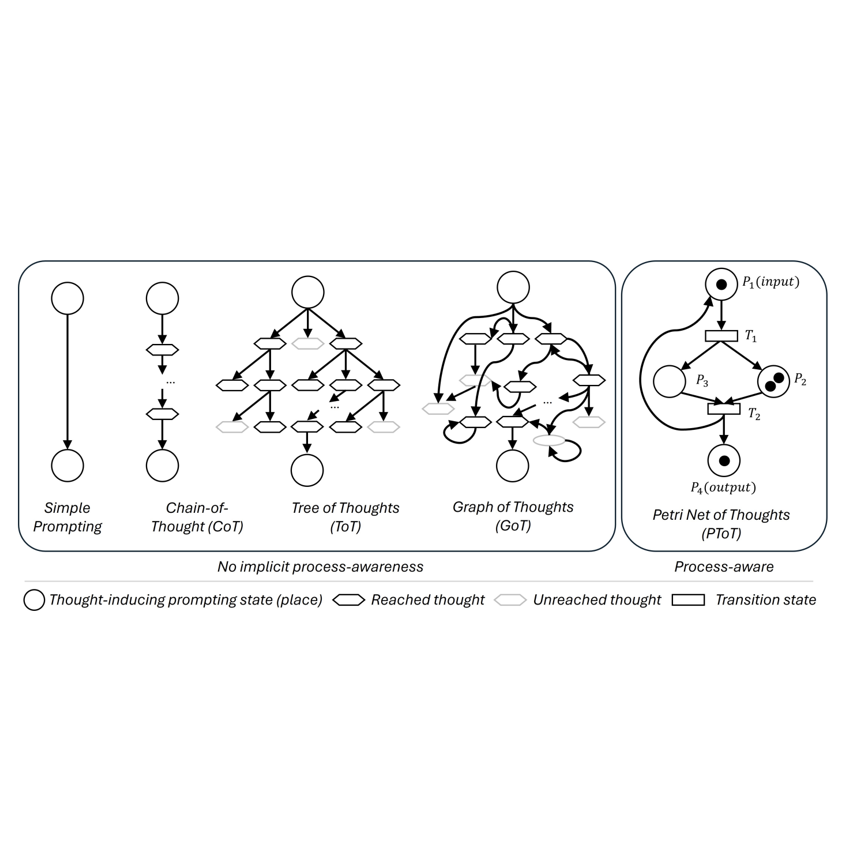

Petri Net of Thoughts: A Structure-Enhanced Prompting Approach for Process-Aware AI

Aleksandar Gavric, Dominik Bork, Henderik A. Proper EMISA 2025 [BibTeX] Introduced a Petri net–guided prompting paradigm improving process-aware reasoning. |

|

Beyond Logs: AI’s Internal Representations as the New Process Evidence

Aleksandar Gavric, Dominik Bork, Henderik A. Proper BPM 2025 [BibTeX] Defined process mining on latent AI representations; introduced relaxed discovery and conformance checking. |

|

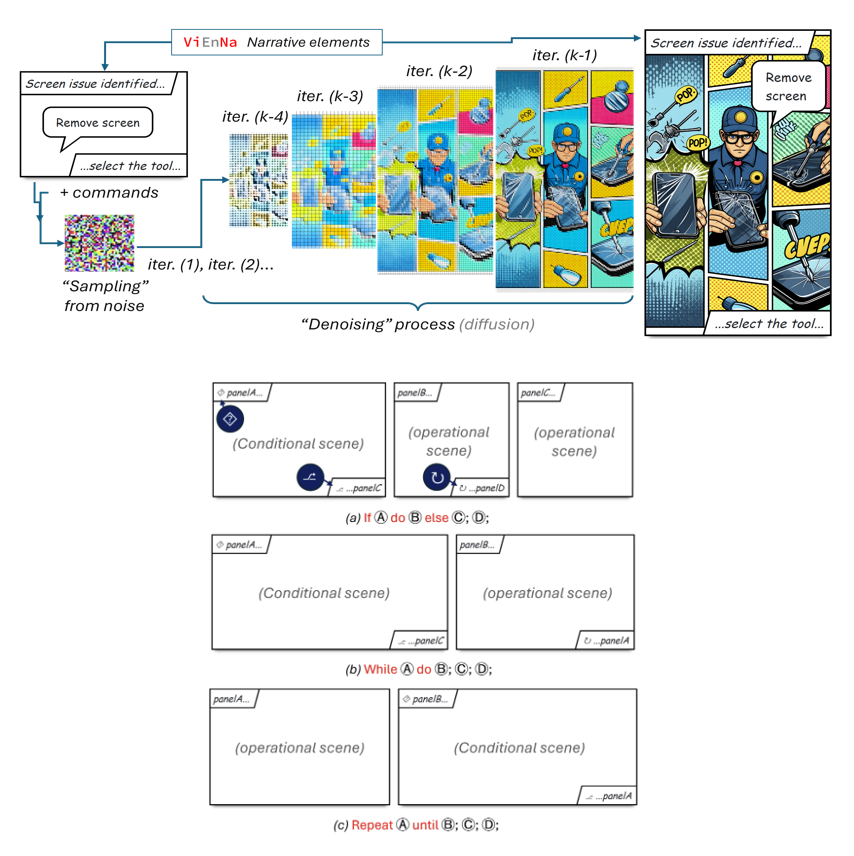

Comics as Process Model Notation: Blending Object-Centric Event Logs and Multimodal Data in Visually Enhanced Narratives

Aleksandar Gavric, Dominik Bork, Henderik A. Proper [BibTeX] Introduces ViEnNa comics as a process-model notation that combines object-centric event logs and multimodal evidence into narrative visual diagrams enabling richer, more intuitive process understanding. |

|

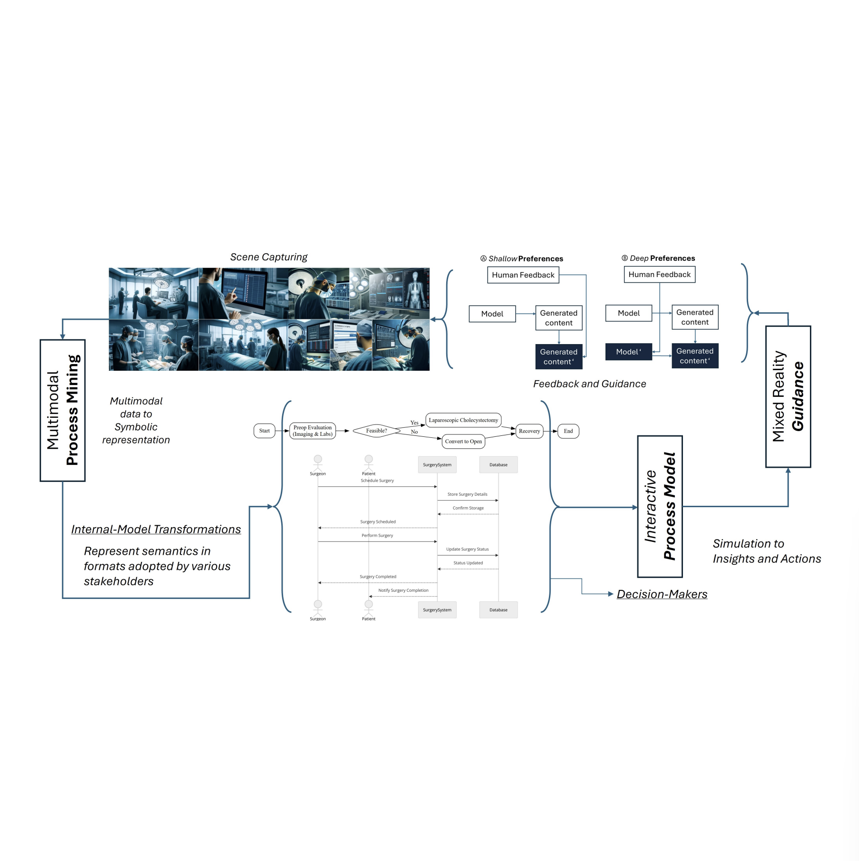

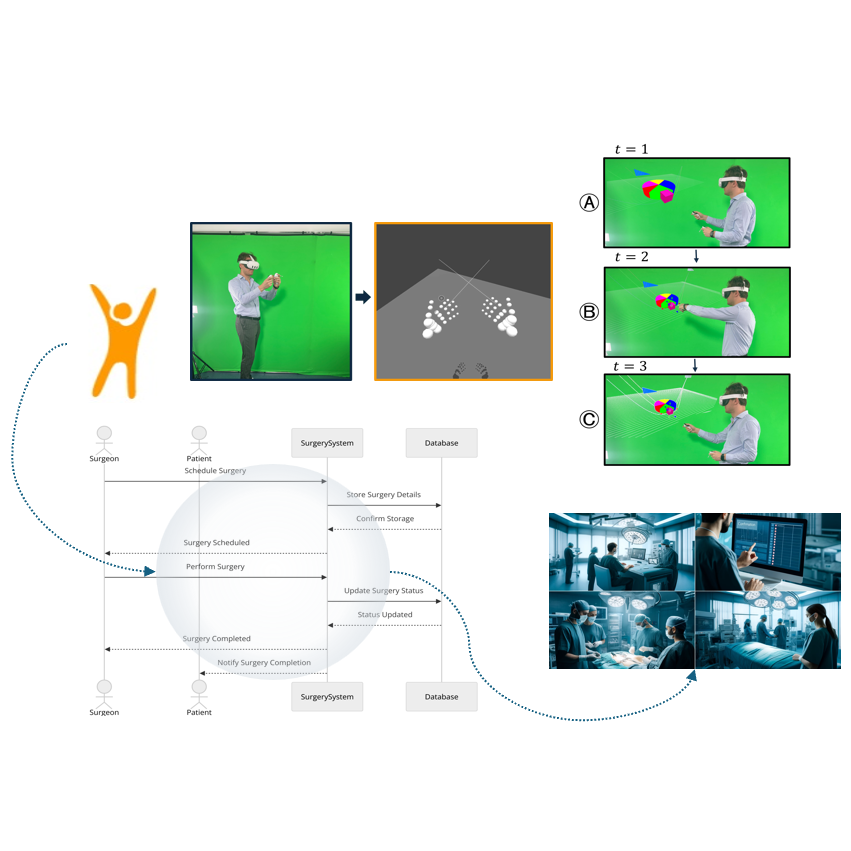

Surgery AI: Multimodal Process Mining & Mixed Reality

Aleksandar Gavric, Dominik Bork, Henderik A. Proper ZEUS 2025 [BibTeX] Real-time surgical multimodal process mining and MR-guided conformance checking. |

|

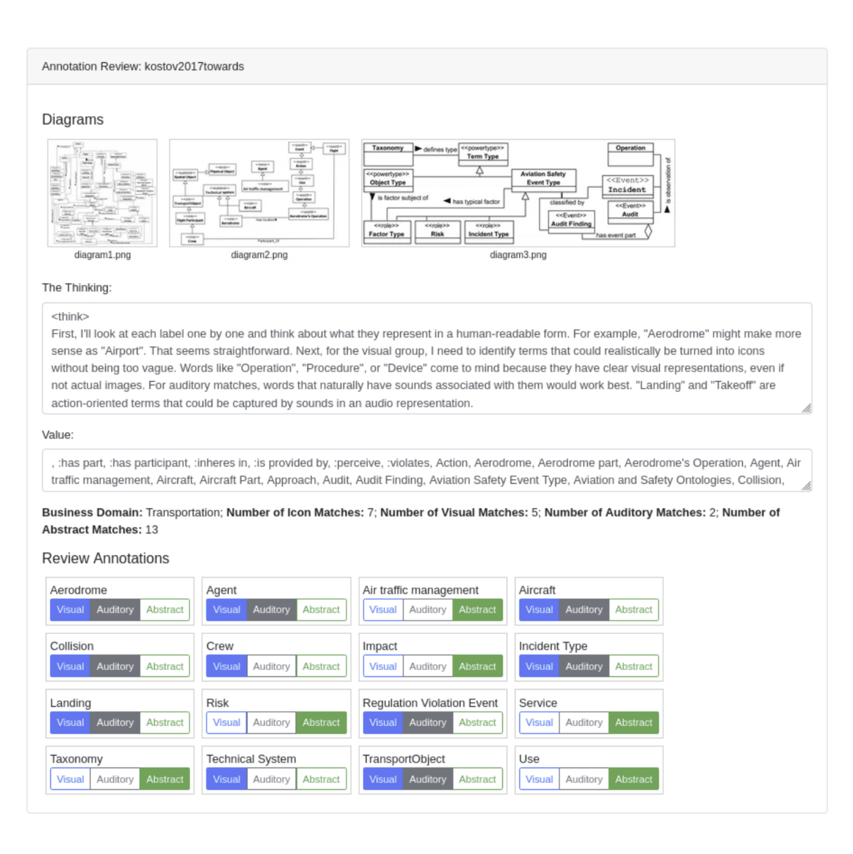

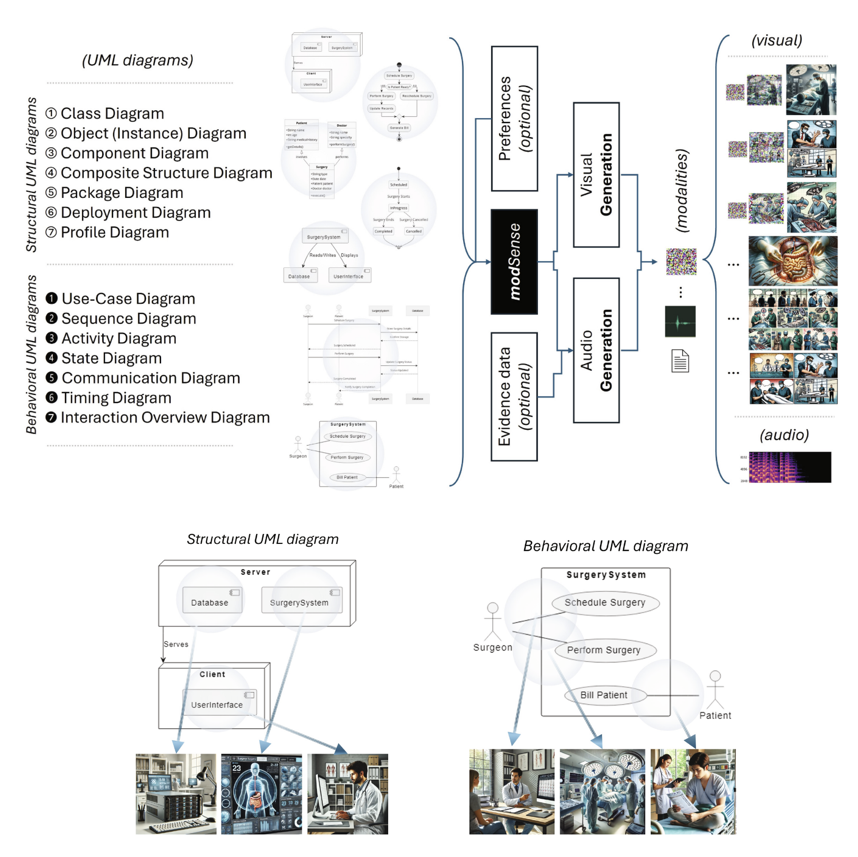

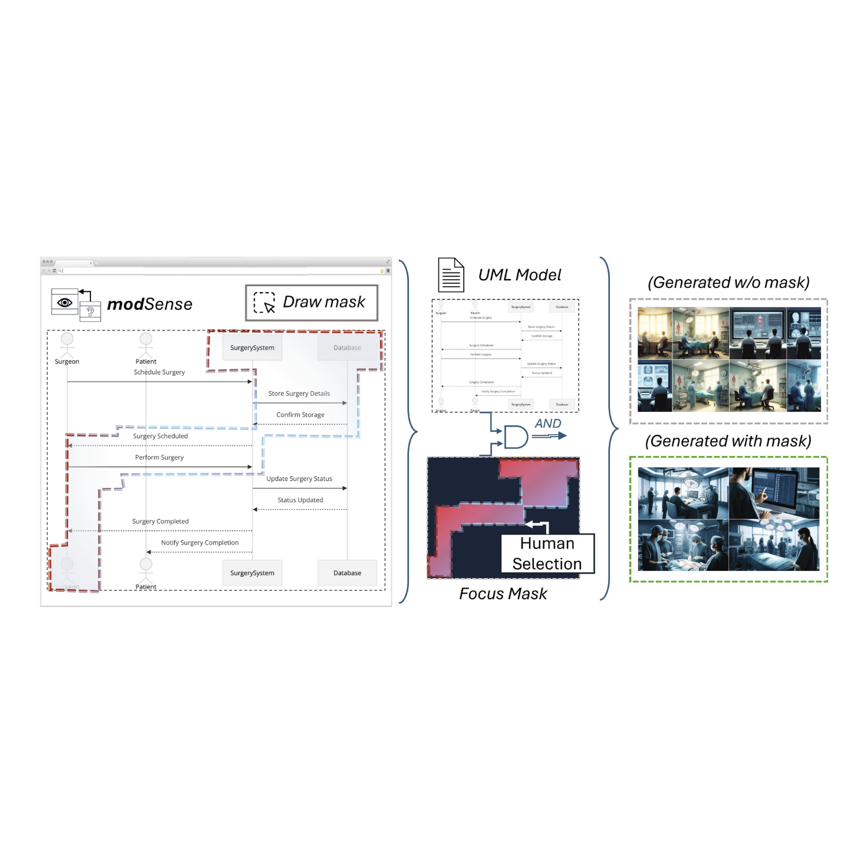

How Does UML Look and Sound? Using AI to Interpret UML Diagrams Through Multimodal Evidence

Aleksandar Gavric, Dominik Bork, Henderik A. Proper ER 2024 Workshops [BibTeX] Applied vision–language models to relate UML diagram elements with synthetic visual or acoustic evidence; introduced a user study linking UML fragments to verbal descriptions and observed interactions. |

|

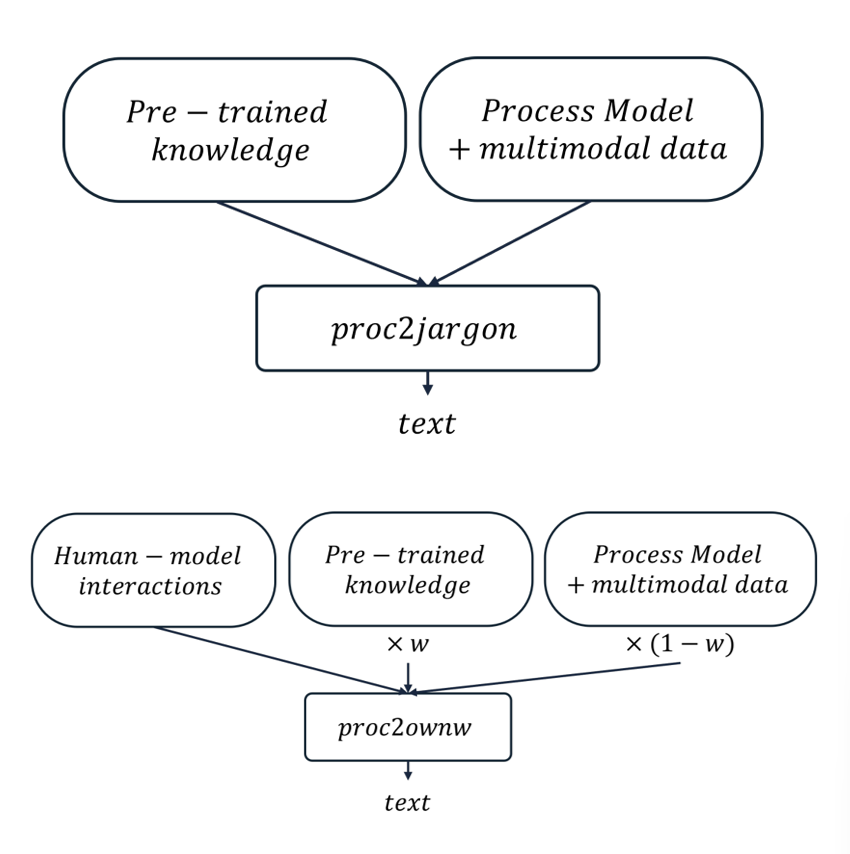

Stakeholder-specific jargon-based representation of multimodal data within business process

Aleksandar Gavric, Dominik Bork, Henderik A. Proper PoEM 2024 (Companion Proceedings) [BibTeX] Designed NLP pipelines that translate multimodal observations into stakeholder-specific jargon, enabling domain-adaptive representation and improved explainability. |

|

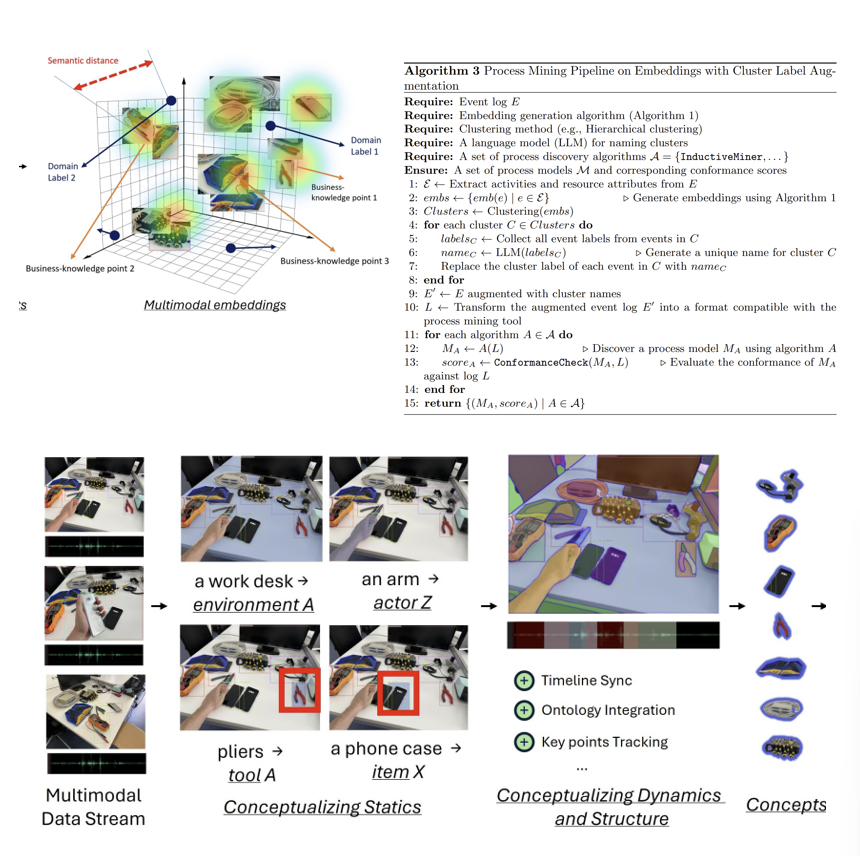

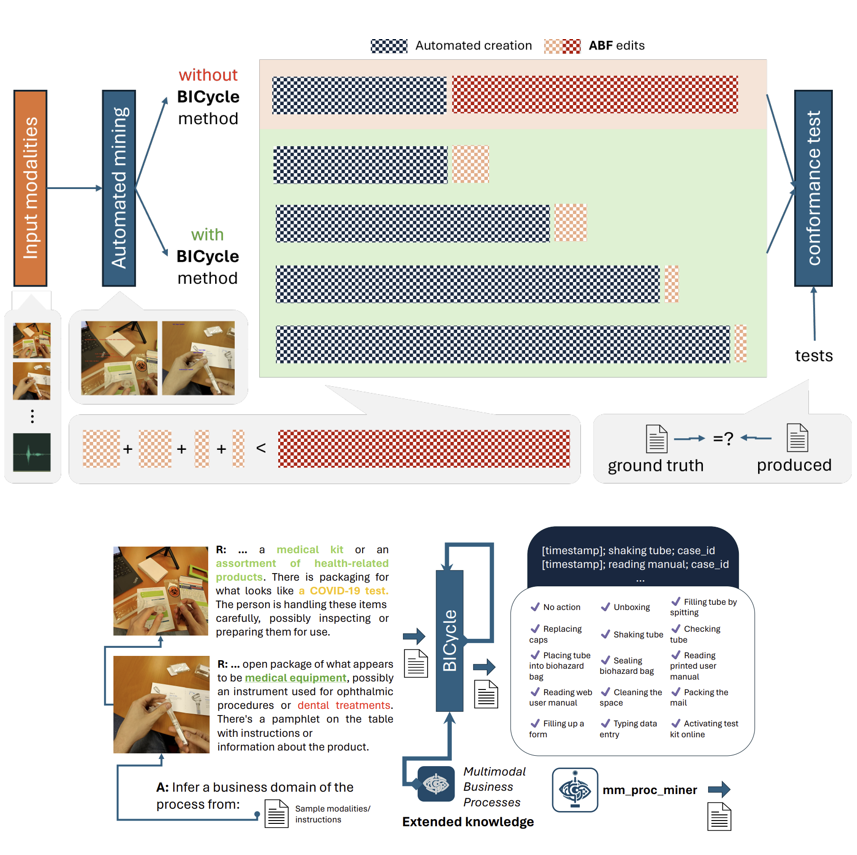

Enriching Business Process Event Logs with Multimodal Evidence

Aleksandar Gavric, Dominik Bork, Henderik A. Proper PoEM 2024 [BibTeX] Augmented traditional event logs with multimodal signals; developed crossmodal encoders to infer missing or tacit activities. |

|

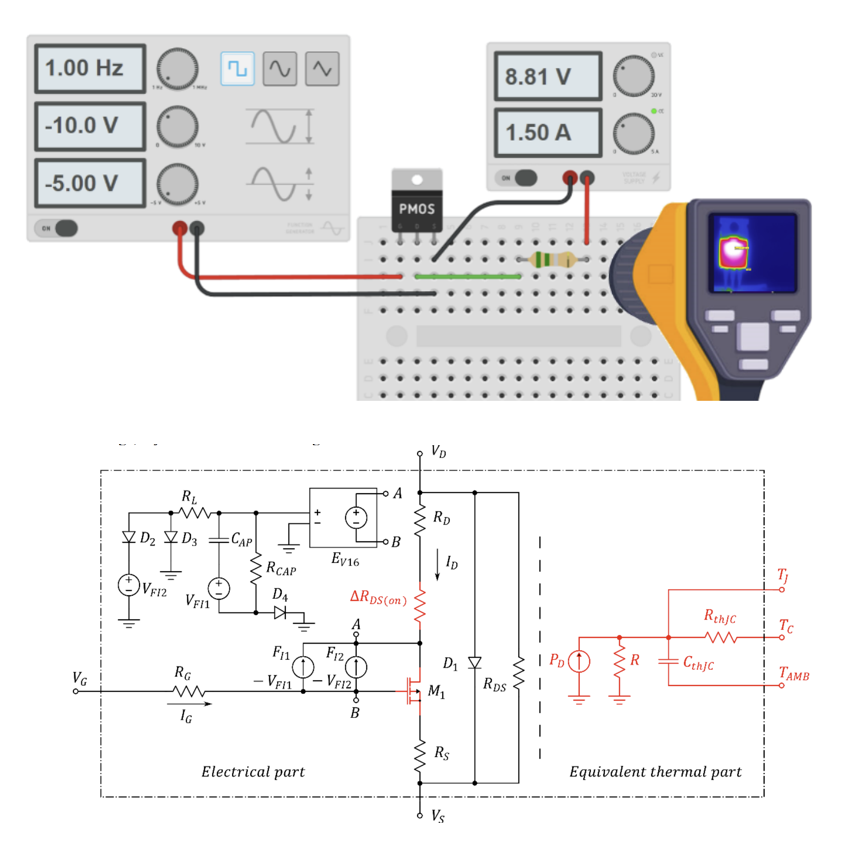

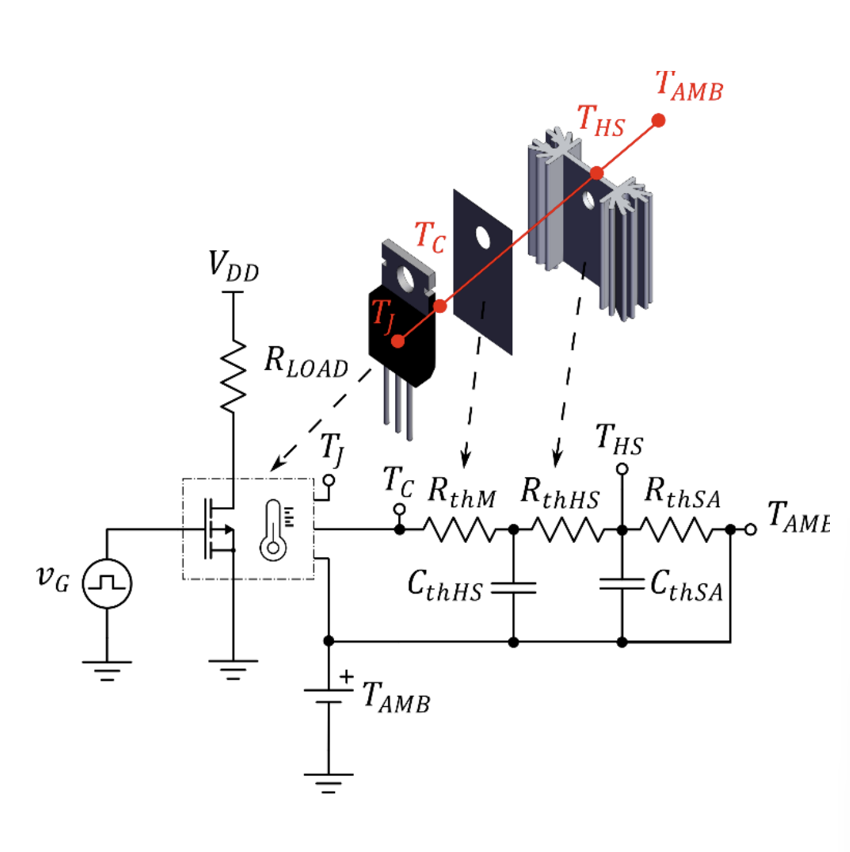

Modified SPICE-Compatible Model Integrating NBTI and Self-Heating Effects for VDMOS Transistors

Marjanović, M., Veljković, S., Mitrović, N., Živanović, E., Aleksandar Gavric, & Danković, D. IcETRAN 2024 [BibTeX] Built physics-informed models predicting transistor degradation; combined simulation data with ML-supported curve-fitting for accuracy under thermal and stress conditions. |

|

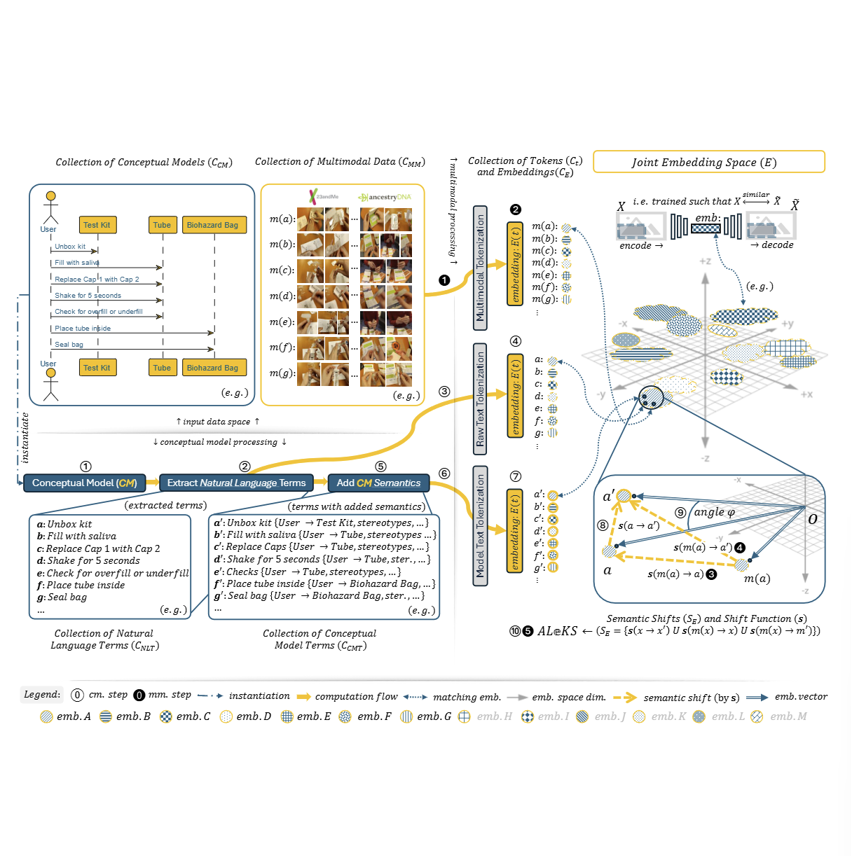

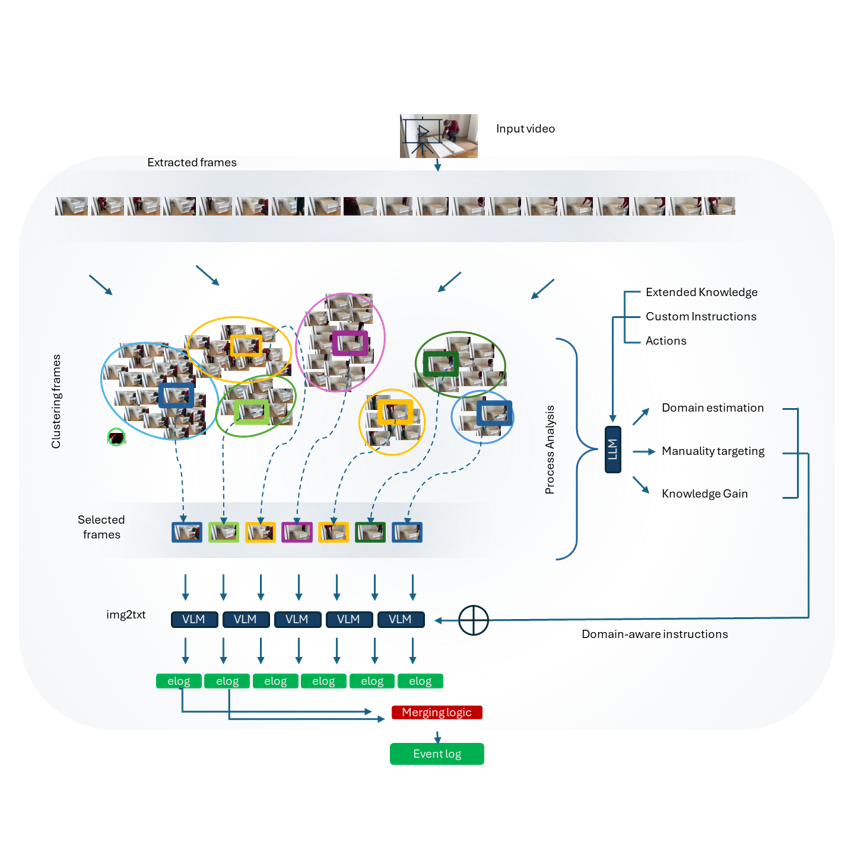

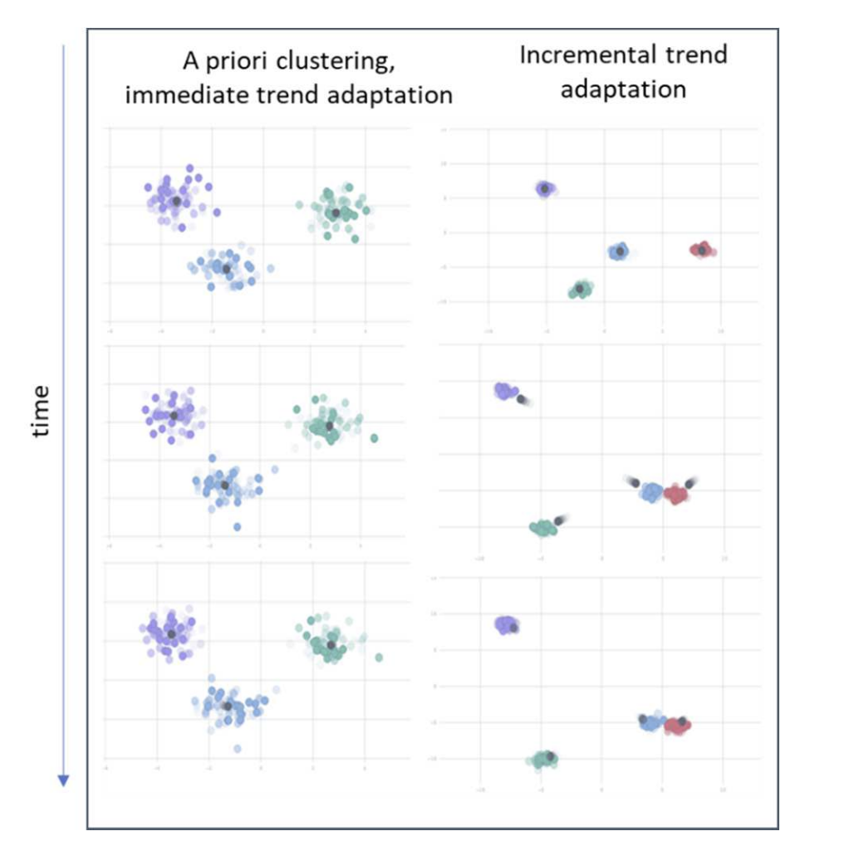

Multimodal Process Mining

Aleksandar Gavric, Dominik Bork, Henderik A. Proper CBI 2024 [BibTeX] Formulated multimodal process mining as a representation-learning task; developed unified embeddings combining video, audio, and UI interactions for robust discovery under ambiguity. |

|

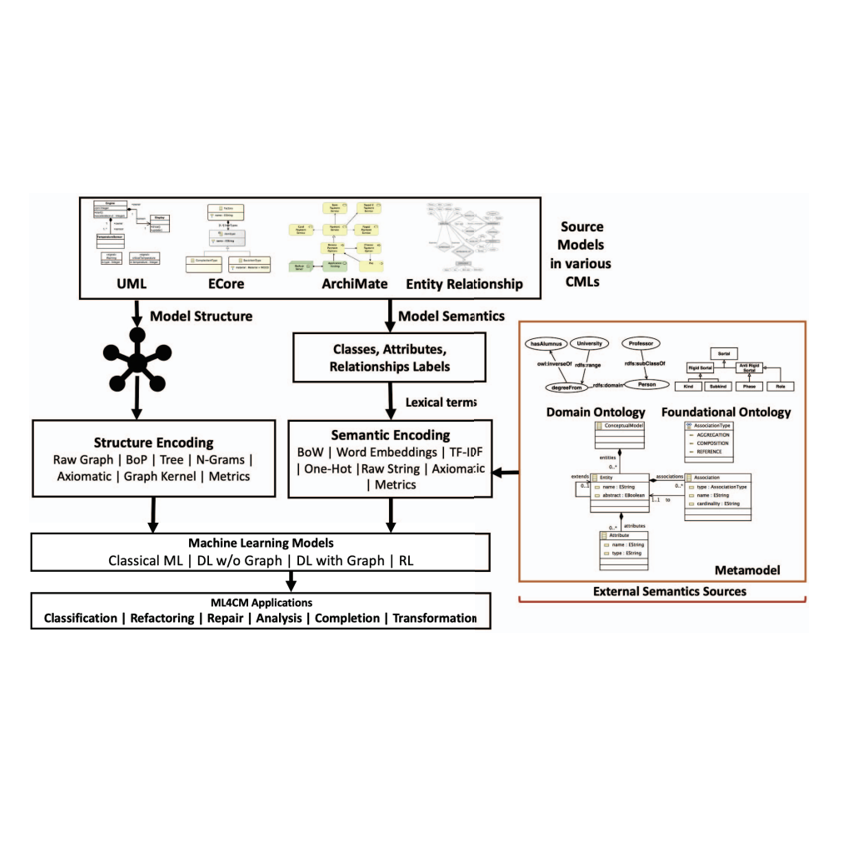

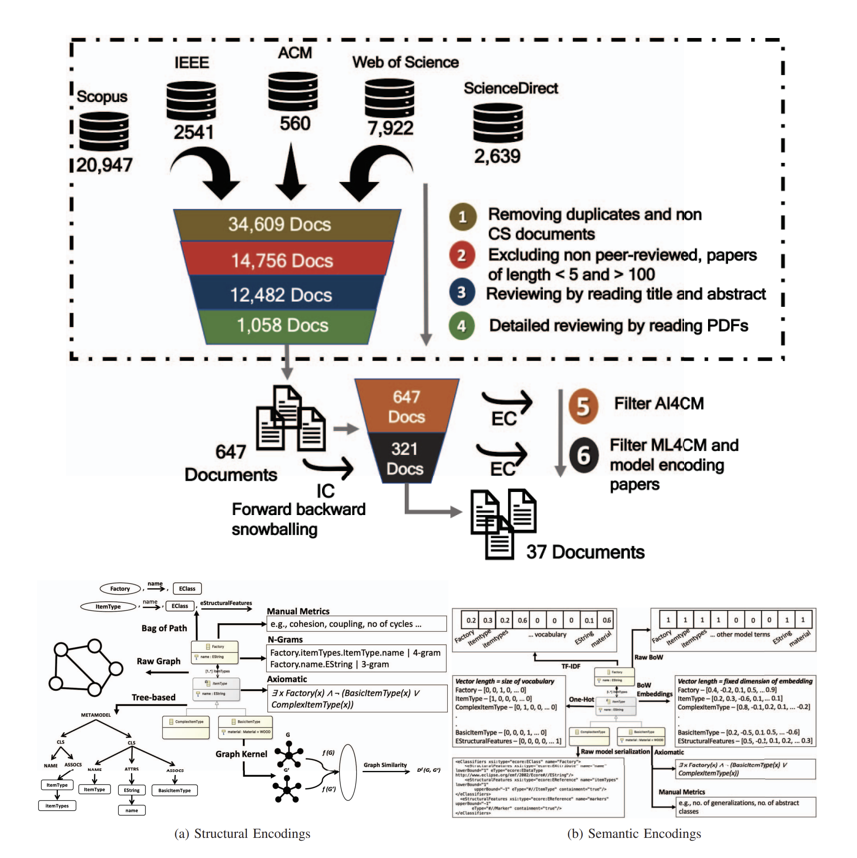

Encoding Conceptual Models for Machine Learning: A Systematic Review

Ali, S. J., Aleksandar Gavric, Henderik A. Proper, & Dominik Bork MODELS-C 2023 [BibTeX] Surveyed ML-ready encodings of BPMN/UML/Petri nets; categorized graph-neural, image-based, and text-based approaches; highlighted open challenges in multimodal interoperability. |

|

Enhancing process understanding through multimodal data analysis and extended reality

Aleksandar Gavric PoEM / EDOC 2023 Companion Proceedings [BibTeX] Demonstrated XR-based reenactments for generating high-quality multimodal logs; evaluated video-driven activity recognition for process discovery. |

|

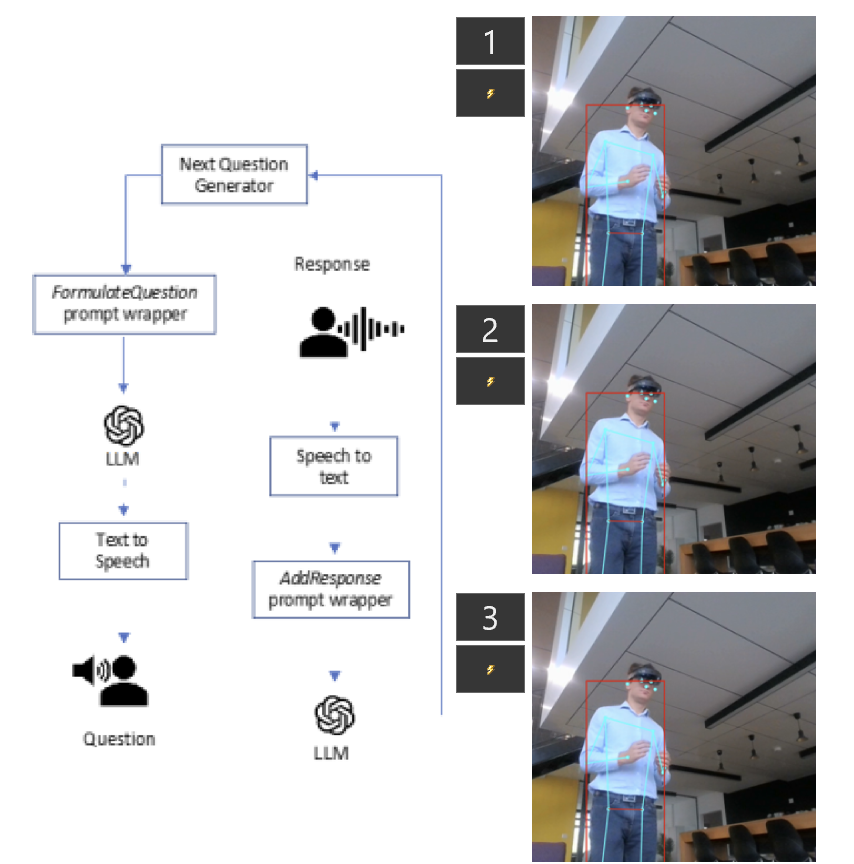

A System for Detection and Tracking of Oculo-Vestibular Complications Associated with Extended Reality Headset Usage

Aleksandar Gavric, Merlinsky, E. A., Aleksandar Stanimirović MIEL 2023, pp. 1–4. IEEE [BibTeX] Examined health issues associated with XR headset usage and introduced a system for detecting and monitoring ocular and vestibular complications, including actionable guidance for prevention. |

|

Physics-Driven Methods for Adaptive Optics Effect in Extended Reality

Merlinsky, E. A., Aleksandar Gavric, Stojković, H., Živanović, E. MIEL 2023, pp. 1–4. IEEE [BibTeX] Surveyed how adaptive optics, eye-tracking, and light-field display physics can enable immersive XR experiences without traditional corrective lenses. |

|

Real-Time Data Processing Techniques for a Scalable Spatial and Temporal Dimension Reduction

Aleksandar Gavric, Vujošević, D., Radosavljević, N., & Prvulović, P. INFOTEH 2022 [BibTeX] Showed experimentally how spatial and temporal dimension reduction of sensor streams can lead to more successful predictive models in different applications. |

|

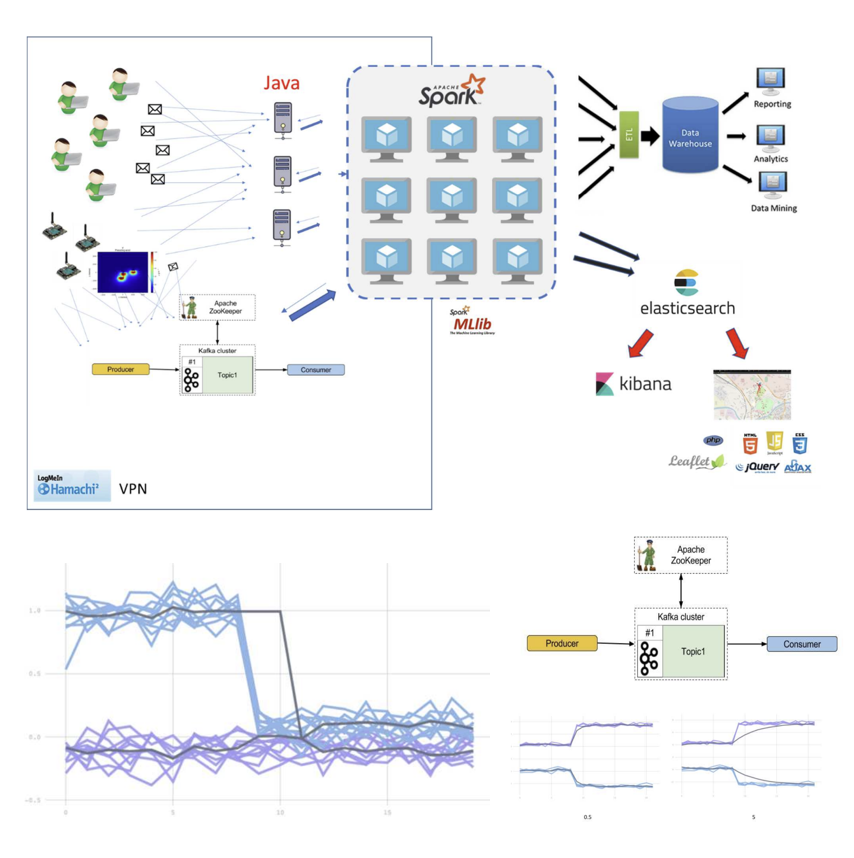

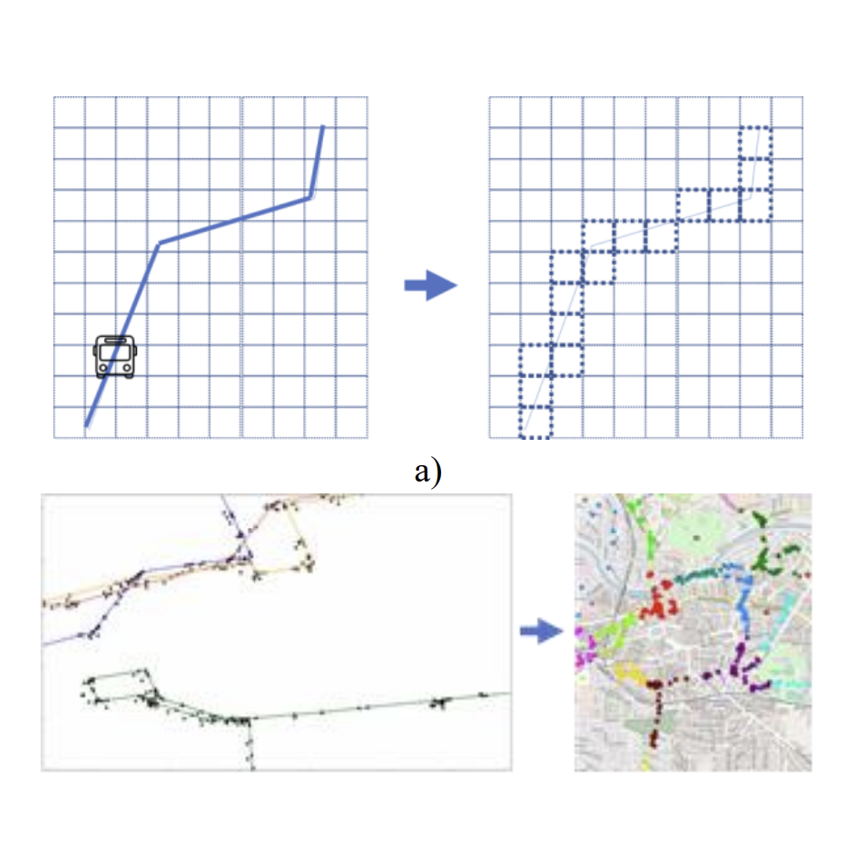

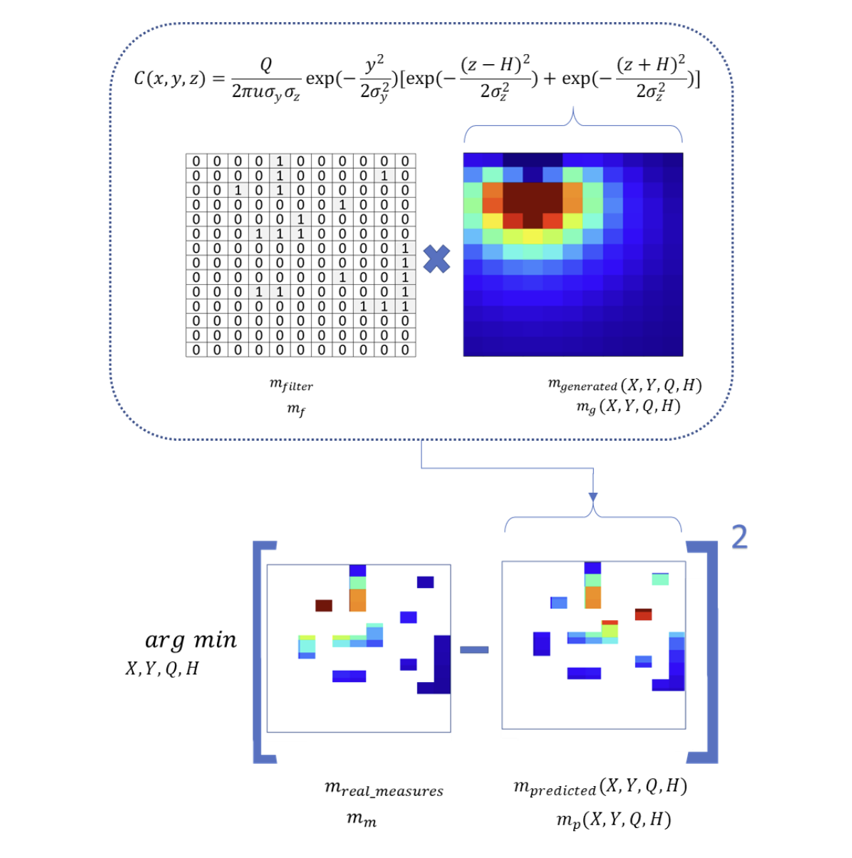

Identification of Air Pollution Sources using Predictive Models and Vehicular Sensor Networks

Aleksandar Gavric, Stanimirović, A., & Stoimenov, L. ICIST 2021 [BibTeX] Designed and implemented a predictive model for a distributed system that applies machine learning to data streams to estimate dominant pollution sources in real time.

Miscellanea |

|

Website template based on ✩. |